Experiment 1: Sound Pattern Interface

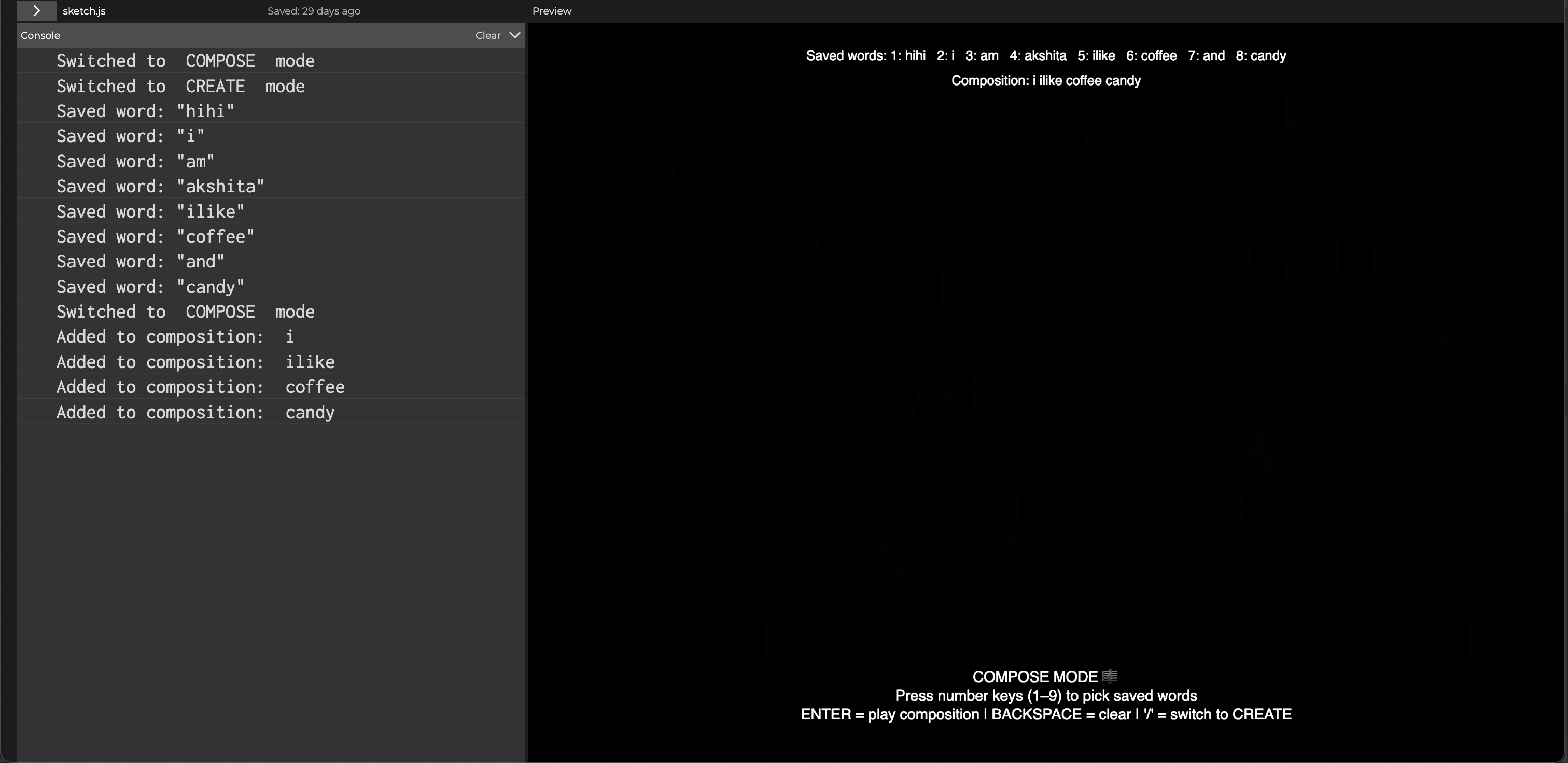

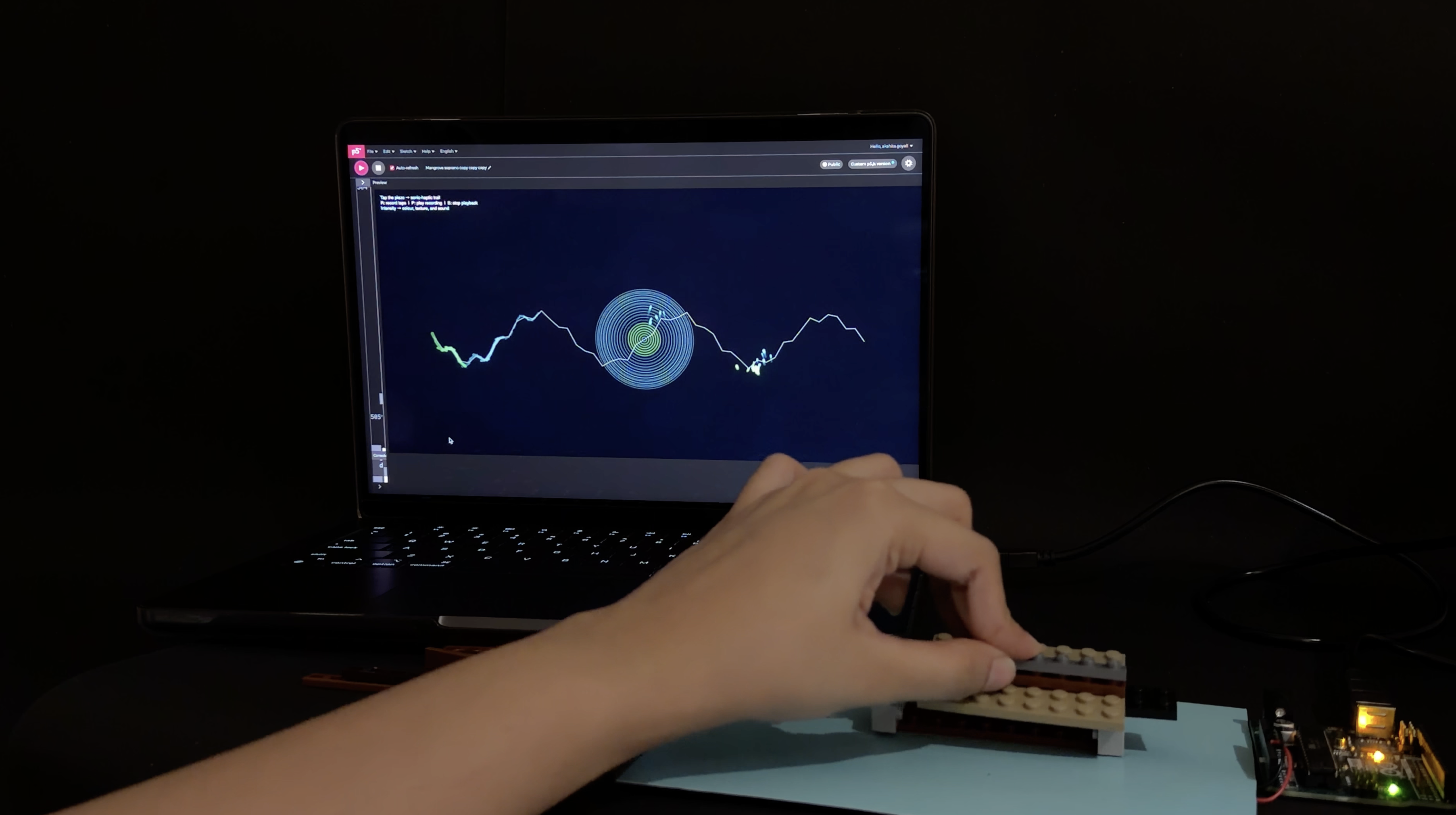

Sound Pattern Interface is a sound-reactive sketch built in p5.js that listens to live microphone input and translates it into three different

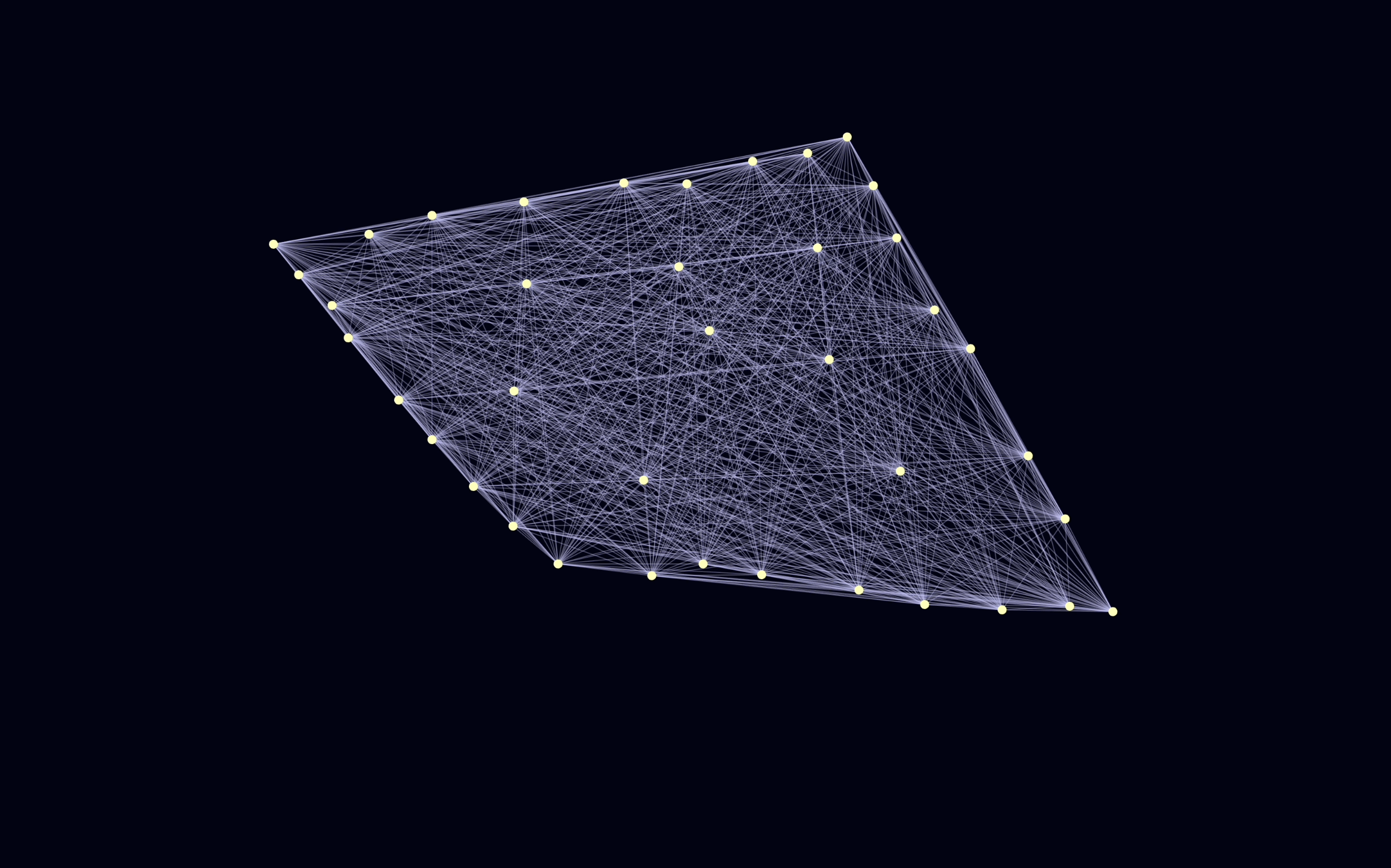

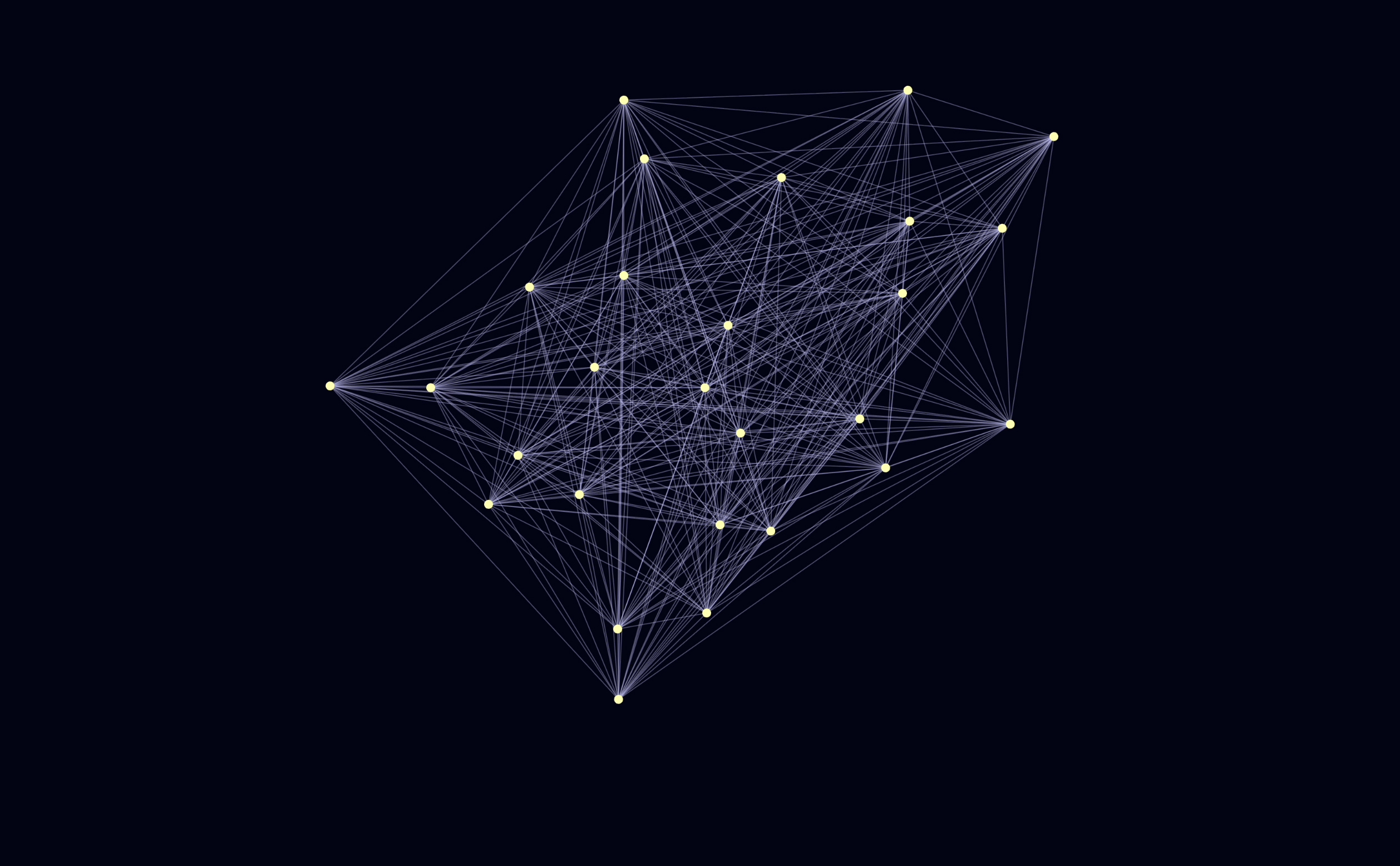

visual “modes”: a DNA-like helix, a drifting neural network, and travelling sound waves that sweep across the screen. Using FFT bands (bass, mids, treble), the system stretches,

compresses and brightens these forms in real time, so the canvas almost behaves like a reaction study space for sound energy.
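
As a rough illustration of how FFT bands can drive a form, here is a minimal sketch of one possible mapping for a single travelling wave. It is not the project's actual code: the function name `bandsToVisuals`, the choice of which band controls which property, and all numeric ranges are assumptions made for this example. It relies on the p5.sound calls `p5.AudioIn`, `p5.FFT`, and `fft.getEnergy()`, which returns a value from 0 to 255 for a named band.

```javascript
// Hypothetical mapping from band energies (0-255) to drawing parameters.
// The band-to-property pairing and the ranges are illustrative assumptions.
function bandsToVisuals({ bass, mid, treble }) {
  // Clamp to the 0-255 range and normalise to 0-1.
  const norm = (v) => Math.min(Math.max(v, 0), 255) / 255;
  return {
    amplitude: 20 + norm(bass) * 100,    // bass stretches the wave vertically
    frequency: 1 + norm(mid) * 4,        // mids compress it into more cycles
    brightness: 80 + norm(treble) * 175, // treble brightens the stroke
  };
}

// p5.js wiring: runs only in a browser with p5.js and p5.sound loaded.
let mic, fft;

function setup() {
  createCanvas(640, 360);
  mic = new p5.AudioIn();
  mic.start();
  fft = new p5.FFT();
  fft.setInput(mic); // analyse the microphone instead of sketch output
}

function mousePressed() {
  // Browsers require a user gesture before audio input can start.
  userStartAudio();
}

function draw() {
  background(0);
  fft.analyze(); // must run before getEnergy() each frame
  const v = bandsToVisuals({
    bass: fft.getEnergy("bass"),
    mid: fft.getEnergy("mid"),
    treble: fft.getEnergy("treble"),
  });

  stroke(v.brightness);
  noFill();
  beginShape();
  for (let x = 0; x <= width; x += 4) {
    // Advance the phase with frameCount so the wave travels across the canvas.
    const phase = (x / width) * TWO_PI * v.frequency + frameCount * 0.05;
    vertex(x, height / 2 + sin(phase) * v.amplitude);
  }
  endShape();
}
```

Keeping the mapping in a plain function, separate from the drawing code, means the same band values could feed the helix and network modes with different ranges, and the mapping can be checked without a microphone.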

The Sketch →